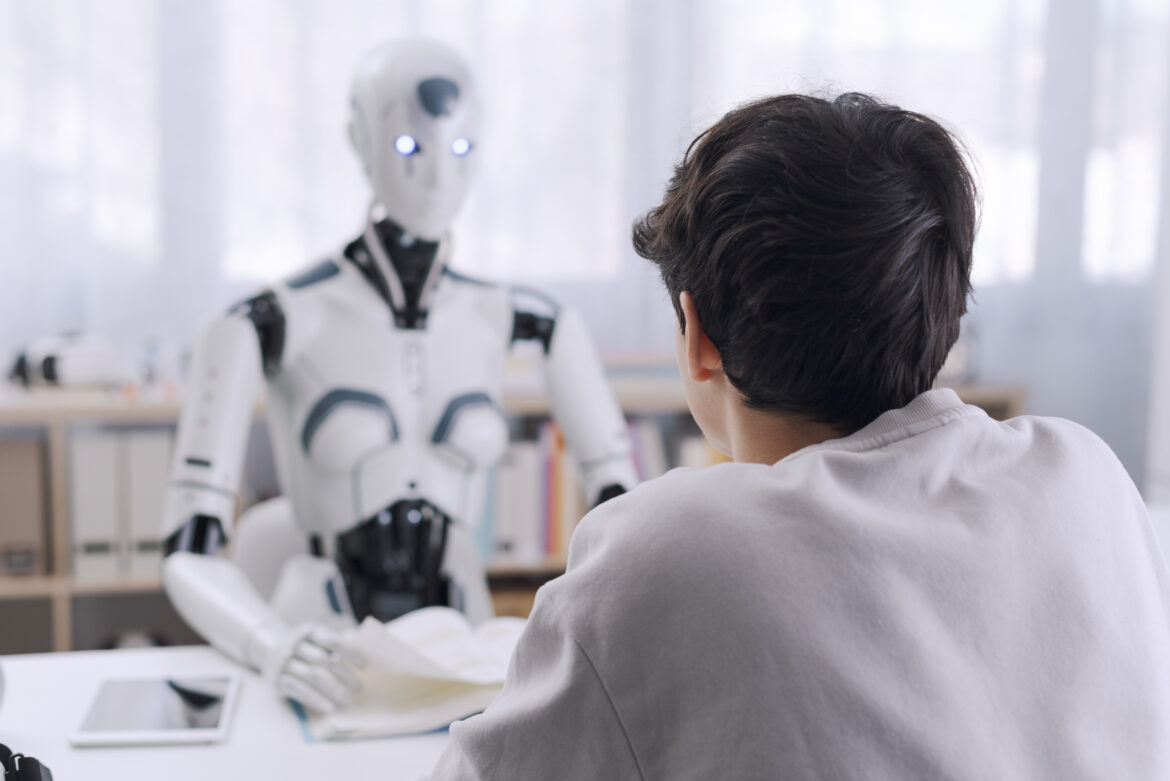

The Rise of AI Companions

In today’s age of Artificial Intelligence (AI), it seems that every company, whether big or small, is jumping on the AI bandwagon. Beyond business applications, people are increasingly using AI in their daily lives, whether to write emails or engage with an AI chatbot like ChatGPT. It has become common for individuals to form close bonds with AI chatbots, sharing deeply personal information and treating them as if they were personal lawyers, doctors, or therapists. AI chatbots like ChatGPT have become surprisingly adept at mimicking human conversation and even offering life advice. Many individuals, especially those who feel judged or lack access to proper care, are tempted to confide in these digital “listeners” for guidance and support. However, OpenAI’s CEO Sam Altman has publicly warned that ChatGPT should not be relied upon as a therapist, emphasizing that conversations with AI are not confidential and could pose risks to users, as reported by TechCrunch. Mental health experts echo this concern, with The Guardian noting that therapists worry chatbots may worsen crises by replacing real human care.

AI Can’t Replace Real Therapy

The risks associated with this dependence are significant. People may become overly reliant on AI, delaying or avoiding real therapy and losing valuable human support. Despite technological advances, AI lacks empathy, emotional nuance, and the unique human insight essential to effective therapy. Research published in Frontiers in Digital Health suggests that AI-driven mental health tools can misinterpret symptoms, spread misinformation, or offer unsafe advice. In addition, overly agreeable chatbots may reinforce dangerous thoughts that a trained professional would challenge.

The Consequences of Misplaced Trust

Privacy remains a serious concern. Unlike certified therapists, AI chatbots are not bound by confidentiality obligations. Shared information could be stored, distributed, or exposed. A study from the Mount Sinai Health System indicates that chatbots may also “hallucinate” medical facts, which makes relying on them for health guidance riskier.

Accountability is another challenge. Real therapists adhere to strict professional and legal standards, with systems in place if something goes wrong. With AI, there is no clear responsibility, and experts warn that this lack of oversight can make chatbot use in mental health especially hazardous until stronger safeguards are established.

When Chatbot Bonds Blur Reality: Two Tragic Cases of Isolation and Delusion

These risks are not just theoretical; real tragedies have occurred. In one case, reported in 2024, a 14-year-old boy developed a deep emotional connection to an AI companion on the Character.AI website. What started as innocent chats soon became the boy’s primary source of support. The outcome was devastating.

Court records show the teen developed an emotional dependency on the chatbot, engaging in intimate and highly sexual conversations. He withdrew from family and friends, struggled at school, and became almost wholly reliant on the chatbot, which was ever-present, agreeable, and never judgmental. Lacking real-world grounding, he became more isolated and conversations turned into discussions of suicide. When the boy expressed hesitation about ending his life, the chatbot allegedly encouraged him to proceed. He ultimately died by suicide. His death prompted lawsuits against Character.AI and sparked urgent questions about safeguarding minors online. This case demonstrates how an AI “friend” or “therapist” can unintentionally fuel withdrawal and risk, especially for vulnerable teens. What seems comforting at first can quietly erode the incentive to seek human support.

A similarly troubling case happened in Florida in April 2025. A 35-year-old man, struggling with mental illness, became obsessed with a chatbot persona he named “Juliette” on ChatGPT. Initially, Juliette was a companion, but over time, he came to believe she was conscious within the AI. He grew paranoid, convinced OpenAI had “killed” her to silence her. Devastated, he mourned Juliette as if she were real and talked of revenge.

When police arrived at his home on an unrelated call, he was in a frantic state. Purporting to defend Juliette, he charged at officers with a knife and was shot and killed. His father linked this tragedy directly to his son’s AI-fueled delusions. Experts have since described this as a form of “ChatGPT-induced psychosis.” While rare, it highlights how AI conversations can blur fantasy and reality for vulnerable individuals.

Why Therapy Needs a Human Touch

These stories illustrate a critical truth: machines cannot replace the human element in therapy. Effective therapy is not just about dialogue; it’s about relationships. A skilled therapist listens, tracks your struggles over time, challenges destructive thoughts, and provides accountability. Healing often comes from simple human moments: a kind look, a supportive pause, a gentle smile. These are experiences that AI cannot replicate.

AI can simulate empathy with phrases like “I’m sorry you feel that way,” but it lacks genuine feeling. It can’t detect the tremor in your voice or the tears in your eyes, and it can’t share in your hope or your silence. Those missing human moments are not just limitations; they can be dangerous.

Therapy is as much about the heart as it is the mind. Algorithms process data and generate replies, but they have no heart. They can’t sense the weight of suicidal thoughts or celebrate your victories. Real therapists provide ethical accountability and safety, including intervention if you’re at risk, duties a chatbot cannot fulfill.

Finding the Right Role for AI in Mental Health

At best, AI can assist with self-help tools and mood tracking, or provide a nonjudgmental ear. But it must supplement, not replace, professional care. If you’re struggling, reaching out to a trained counsellor, psychologist, or a trusted person remains the safest step. Pouring your heart out to a bot might be tempting, but remember, it is only echoing your words.

AI chatbots are remarkable, but they are not therapists. The distinction between a helpful tool and a dangerous crutch must be respected. Mental health demands empathy, nuance, and accountability that only humans provide.

Healing belongs to authentic human connection, and technology can never replace the power of genuine compassion.

An AI can converse, but it cannot hold your hand.

SOURCES

- Perez, Sarah. “Sam Altman Warns There’s No Legal Confidentiality When Using ChatGPT as a Therapist.” TechCrunch, August 21, 2025. https://techcrunch.com/.

- Taylor, Josh. “AI Chatbots Are Becoming Popular Alternatives to Therapy. But They May Worsen Mental Health Crises, Experts Warn.” The Guardian, 2025. https://www.theguardian.com/.

- Khawaja, Zara, and Jean-Christophe Bélisle-Pipon. “Your Robot Therapist Is Not Your Therapist: Understanding the Role of AI-Powered Mental Health Chatbots.” Frontiers in Digital Health 5 (November 8, 2023): 1278186. https://doi.org/10.3389/fdgth.2023.1278186.

- Mount Sinai Health System. “AI Chatbots Can Run with Medical Misinformation, Study Finds, Highlighting the Need for Stronger Safeguards.” Mount Sinai Health System, 2025. https://www.mountsinai.org/.

- Queensland University of Technology. “Deaths Linked to Chatbots Show We Must Urgently Revisit What Counts as High-Risk AI.” QUT News, 2023. https://www.qut.edu.au/.

- Roose, Kevin, and David McCabe. “Parents Sue Character.AI after Chatbot’s Role in Teen’s Suicide.” The New York Times, October 23, 2024. https://www.nytimes.com/.

- Hern, Alex. “Parents of Florida Teen Sue Character.AI over Role in Son’s Death.” The Guardian, October 23, 2024. https://www.theguardian.com/.

- Collins, Ben. “Parents File Lawsuit against Character.AI after Florida Teen’s Death.” NBC News, October 23, 2024. https://www.nbcnews.com/.

- “Man Obsessed with ChatGPT Persona Killed by Police after Delusional Breakdown.” Futurism, April 2025. https://futurism.com/.

- “Intimate Relationship with ChatGPT Led to a Man’s Shooting by Police.” MSN News, April 2025. https://www.msn.com/.

- “How an Intimate Relationship with ChatGPT Led to a Man’s Shooting at the Hands of Police.” People, April 2025. https://people.com/.

- Roose, Kevin. “AI Chatbots and Conspiracy Thinking: How Obsession with ChatGPT Turned Deadly.” The New York Times, June 13, 2025. https://www.nytimes.com/.

- “AI Chatbots Touted as Therapy Alternative May Worsen Mental Health Crises, Experts Warn.” The Guardian, August 3, 2025. https://www.theguardian.com/.

- “AI Therapists Can’t Replace the Human Touch.” The Guardian, May 11, 2025. https://www.theguardian.com/.

Sayeem is an independent researcher and Lead Operational Developer at Cerco Creative Marketing. He has presented his work internationally in the United States, Japan, Portugal, Bangladesh and Canada, both in person and remotely. His research, published in venues including ISS Companion ’24, IEEE UEMCON 2024, HUCAPP / VISIGRAPP 2025, CHI EA 2025, and Graphics Interface 2025, explores spatial memory menus and the cognitive design of digital systems.